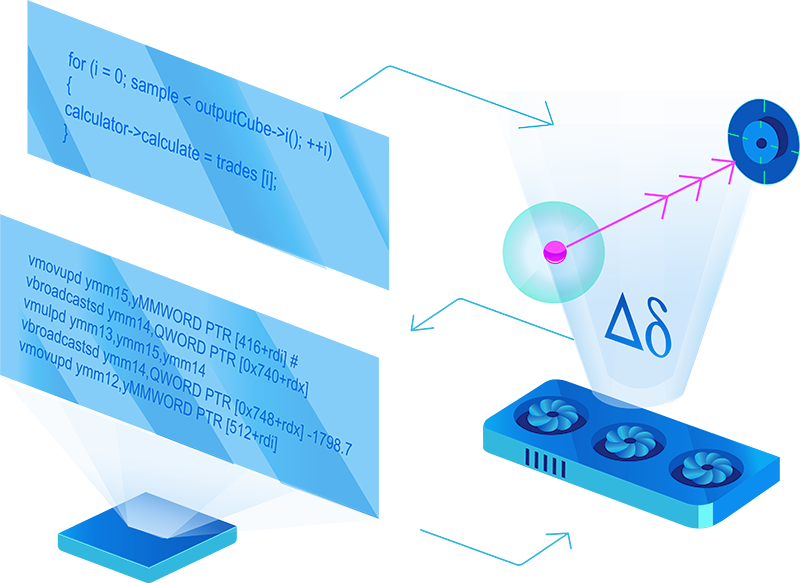

Modern compilers for object-oriented languages are not optimized for calculation-intensive tasks. Extensive use of abstraction and virtual functions makes code easy to read, but the performance penalty is high.

Writing high-performance, vectorized, thread-safe code is tedious and time-consuming, and the result is usually hard to maintain. MatLogica takes care of performance so that developers can focus on adding value.

Our Solutions

Accelerator

MatLogica's accelerator uses native CPU vectorization and multi-threading to deliver performance comparable to a GPU. For problems such as Monte Carlo simulations, historical analysis, and "what if" scenarios, speedups of 6-100x are possible, depending on the performance of the original code.
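To make the idea of combining vectorization with multi-threading concrete, here is a minimal hand-written sketch of a Monte Carlo pricer for a European call under Black-Scholes dynamics. It is not MatLogica's accelerator or its API: the function names `payoff_sum` and `mc_call_price`, the fixed seeds, and the choice of model are all assumptions made for illustration. The inner loop is written in a simple, branch-light form that compilers can often auto-vectorize (depending on flags and the math library), and the paths are split across `std::thread` workers.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <random>
#include <thread>
#include <vector>

// Sum of call payoffs over a batch of pre-drawn standard normal samples.
// The loop is a plain reduction over contiguous data, which is the shape
// that auto-vectorizers handle best (hypothetical helper, for illustration).
double payoff_sum(const std::vector<double>& z, double s0, double k,
                  double r, double sigma, double t) {
    const double drift = (r - 0.5 * sigma * sigma) * t;
    const double vol = sigma * std::sqrt(t);
    double sum = 0.0;
    for (std::size_t i = 0; i < z.size(); ++i) {
        const double st = s0 * std::exp(drift + vol * z[i]);  // terminal price
        sum += std::max(st - k, 0.0);                         // call payoff
    }
    return sum;
}

// Monte Carlo estimate of a European call price. Each worker thread owns its
// random generator and writes to its own slot, so no locking is needed.
double mc_call_price(double s0, double k, double r, double sigma, double t,
                     std::size_t paths_per_thread, unsigned num_threads) {
    assert(num_threads > 0 && paths_per_thread > 0);
    std::vector<double> partial(num_threads, 0.0);
    std::vector<std::thread> workers;
    for (unsigned w = 0; w < num_threads; ++w) {
        workers.emplace_back([&, w] {
            std::mt19937_64 rng(1234u + w);  // assumed fixed seed per worker
            std::normal_distribution<double> normal(0.0, 1.0);
            std::vector<double> z(paths_per_thread);
            for (double& x : z) x = normal(rng);
            partial[w] = payoff_sum(z, s0, k, r, sigma, t);
        });
    }
    for (std::thread& th : workers) th.join();

    double total = 0.0;
    for (double p : partial) total += p;
    const double n = static_cast<double>(paths_per_thread) * num_threads;
    return std::exp(-r * t) * total / n;  // discounted average payoff
}
```

Calling `mc_call_price(100.0, 100.0, 0.05, 0.2, 1.0, 200000, 4)` should give an estimate close to the analytic Black-Scholes value of roughly 10.45. Writing and maintaining this kind of code by hand for every model is the effort the accelerator is meant to remove.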

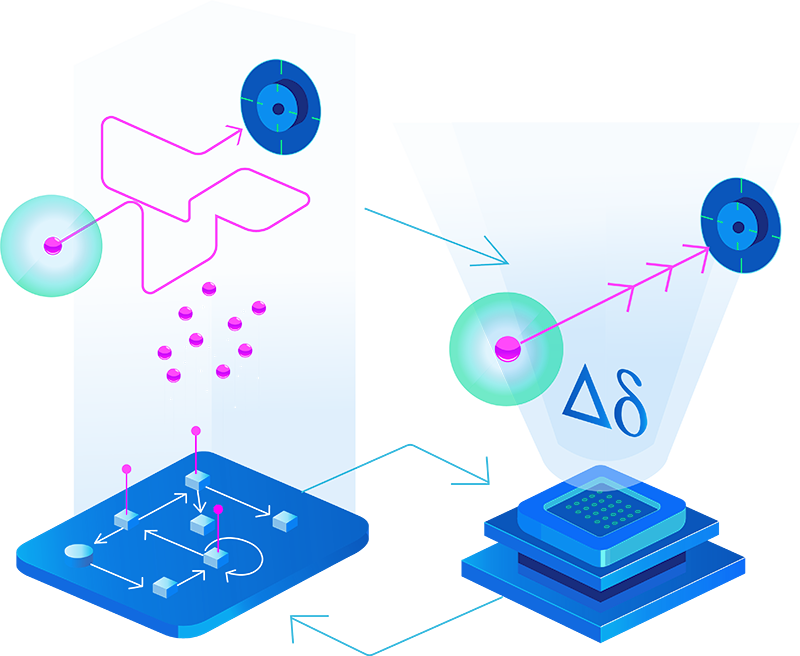

AADC Library

Calculating derivatives is essential in finance, machine learning, and numerous other scientific and engineering fields. Our compiler speeds up the automatic adjoint differentiation (AAD) method itself, delivering pricing and scenario analysis unattainable with competing products. MatLogica's approach enables AAD calculations in legacy code, whereas alternative solutions require extensive effort and changes to the source code.
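For readers unfamiliar with AAD, the following is a minimal sketch of the textbook tape-based (operator-overloading) form of reverse-mode differentiation. It is not the AADC library or its API: `Tape`, `Var`, `make_input`, `sine`, and `gradient` are hypothetical names chosen for this illustration. The forward pass records each operation and its local partial derivatives on a tape; a single backward sweep then yields the derivative of the output with respect to every input.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Each tape node stores up to two parents and the local partial derivative
// of the node with respect to each parent.
struct Tape {
    struct Node {
        std::size_t parent[2];
        double weight[2];
    };
    std::vector<Node> nodes;

    std::size_t push(std::size_t p0, double w0, std::size_t p1, double w1) {
        nodes.push_back({{p0, p1}, {w0, w1}});
        return nodes.size() - 1;
    }
};

// A recorded value: its position on the tape plus its numeric value.
struct Var {
    Tape* tape;
    std::size_t index;
    double value;
};

// Inputs have no real parents; they point at themselves with zero weight.
Var make_input(Tape& tape, double value) {
    const std::size_t i = tape.nodes.size();
    tape.push(i, 0.0, i, 0.0);
    return {&tape, i, value};
}

Var operator+(Var a, Var b) {
    return {a.tape, a.tape->push(a.index, 1.0, b.index, 1.0), a.value + b.value};
}

Var operator*(Var a, Var b) {
    return {a.tape, a.tape->push(a.index, b.value, b.index, a.value),
            a.value * b.value};
}

Var sine(Var a) {
    return {a.tape, a.tape->push(a.index, std::cos(a.value), a.index, 0.0),
            std::sin(a.value)};
}

// Backward sweep: propagate adjoints from the output to all earlier nodes.
// The returned vector is indexed by tape position.
std::vector<double> gradient(const Tape& tape, Var output) {
    assert(output.index < tape.nodes.size());
    std::vector<double> adjoint(tape.nodes.size(), 0.0);
    adjoint[output.index] = 1.0;
    for (std::size_t i = tape.nodes.size(); i-- > 0;) {
        const Tape::Node& n = tape.nodes[i];
        adjoint[n.parent[0]] += n.weight[0] * adjoint[i];
        adjoint[n.parent[1]] += n.weight[1] * adjoint[i];
    }
    return adjoint;
}
```

For example, with `x = make_input(tape, 2.0)`, `y = make_input(tape, 3.0)`, and `f = x * y + sine(x)`, the entries of `gradient(tape, f)` at `x.index` and `y.index` hold `y + cos(x)` and `x` respectively. Note that this style requires replacing `double` with a recording type throughout the code, which is the kind of source change the paragraph above refers to.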

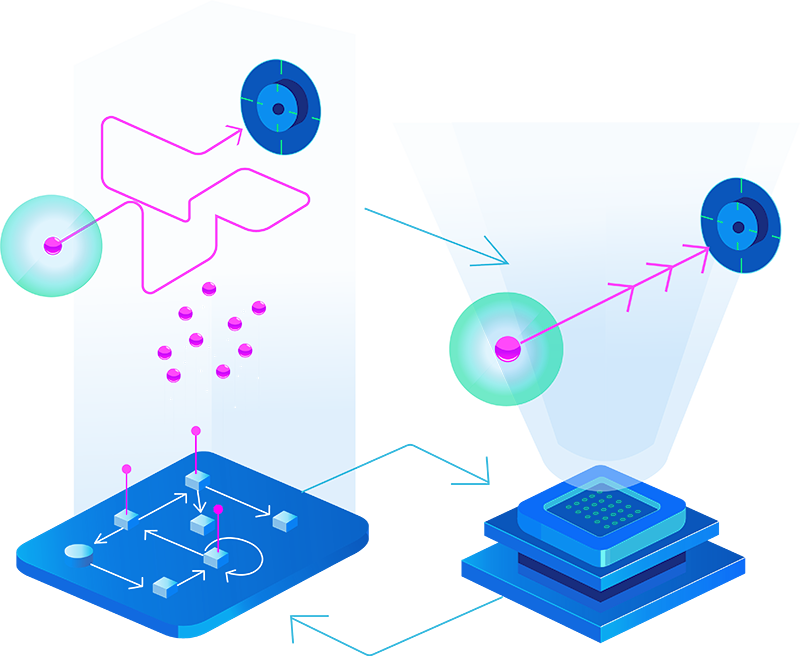

GPU

We don't currently support machine code generation for a GPU backend. Instead, we can take existing analytics implemented for CUDA/GPU and run them on scalable CPU hardware, taking advantage of AVX2/AVX-512 vectorization, multiple cores, larger memory, and AAD.